July 2012. A Korean pop star nobody in the West has heard of drops a music video, and the internet goes collectively, spectacularly insane. By December, Psy’s “Gangnam Style” will be the first video ever to crack a billion YouTube views, and while everyone is doing the horse dance at office parties and feeling very global about themselves, something far more consequential is quietly metastasizing underneath all that joy. That was the exact moment content discovery stopped being about what you went looking for and started being about what the machine decided to bring you.

I want to be precise here, because precision matters when the thing you’re diagnosing is invisible by design. This isn’t technophobia. I’m not waving a fist at software. The recommendation engine, this thing humming inside Spotify, YouTube, TikTok, Netflix, inside basically every platform that mediates how culture reaches you, is one of the more elegant engineering achievements of our era. Genuinely. The ability to navigate 100 million songs and surface something that feels like it was waiting specifically for you? That’s remarkable.

The same elegance that personalizes is the elegance that narrows. The same intelligence that finds you exactly what you want is the intelligence that, over time, quietly walls you inside your own preferences, decorates the cell beautifully, and calls it discovery. That’s the thing I want to tear apart. Not whether algorithms work. They work brilliantly. But what they work toward, and what gets destroyed in the process.

IT’S NOT MAGIC. IT’S GEOMETRY.

Take Spotify, because its system is unusually well documented. Its recommendation engine marries content-based filtering (analyzing audio characteristics: tempo, key, instrumentation) with collaborative filtering (watching listening patterns across millions of users). It also deploys word2vec, a technique borrowed from natural language processing and repurposed for music. Anderson and colleagues describe it cleanly: playlists become “documents,” songs within them become “terms,” and the model learns to predict which songs turn up near one another, just as word2vec learns which words keep each other company in a sentence. Songs end up clustered in a multidimensional vector space, with similar songs sitting closer together. The algorithm navigates this space to find what you’re statistically likely to want next.
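To make “playlists as documents” concrete, here is a minimal sketch of how word2vec’s skip-gram variant turns playlists into training examples: each song is paired with the songs within a small window on either side of it. The function name `skipgram_pairs`, the window size, and the song names are illustrative assumptions, not Spotify’s code; a real pipeline would feed pairs like these to a model (gensim’s `Word2Vec`, for example) that learns one vector per song.

```python
from typing import List, Tuple

def skipgram_pairs(playlists: List[List[str]], window: int = 2) -> List[Tuple[str, str]]:
    """Turn each playlist (a "document") into (target song, context song) pairs.

    Songs within `window` positions of each other count as context, the same way
    skip-gram treats the words around a word in a sentence.
    """
    pairs = []
    for playlist in playlists:
        for i, target in enumerate(playlist):
            lo = max(0, i - window)
            hi = min(len(playlist), i + window + 1)
            for j in range(lo, hi):
                if j != i:  # a song is not its own context
                    pairs.append((target, playlist[j]))
    return pairs

# Hypothetical toy playlists; the song names are placeholders.
playlists = [
    ["song_a", "song_b", "song_c"],
    ["song_b", "song_c", "song_d"],
]
pairs = skipgram_pairs(playlists, window=1)
print(len(pairs))  # 8
```

Songs that co-occur across many playlists (here `song_b` and `song_c`) generate repeated pairs, which is what pulls their learned vectors together.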

That’s not magic. It’s geometry. And geometry has no interest in whether you’ve ever heard a Bulgarian folk choir.
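Once every song is a vector, “similar” simply means “nearby.” This sketch ranks songs by cosine similarity, one common way to measure closeness in an embedding space. The three-dimensional vectors and track names are invented for illustration; production systems use far more dimensions and approximate nearest-neighbour indexes.

```python
import math
from typing import Dict, List

def cosine(u: List[float], v: List[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))

def nearest(query: str, vectors: Dict[str, List[float]], k: int = 2) -> List[str]:
    """The k songs whose vectors point most nearly the same way as the query's."""
    q = vectors[query]
    others = [name for name in vectors if name != query]
    others.sort(key=lambda name: cosine(q, vectors[name]), reverse=True)
    return others[:k]

# Hypothetical 3-D song embeddings.
vectors = {
    "indie_folk_1": [0.9, 0.1, 0.0],
    "indie_folk_2": [0.8, 0.2, 0.1],
    "synth_pop_1": [0.1, 0.9, 0.2],
    "bulgarian_choir": [0.0, 0.1, 0.9],
}
print(nearest("indie_folk_1", vectors))  # ['indie_folk_2', 'synth_pop_1']
```

The choir never makes the shortlist, not because anyone judged it, but because its vector points somewhere else.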

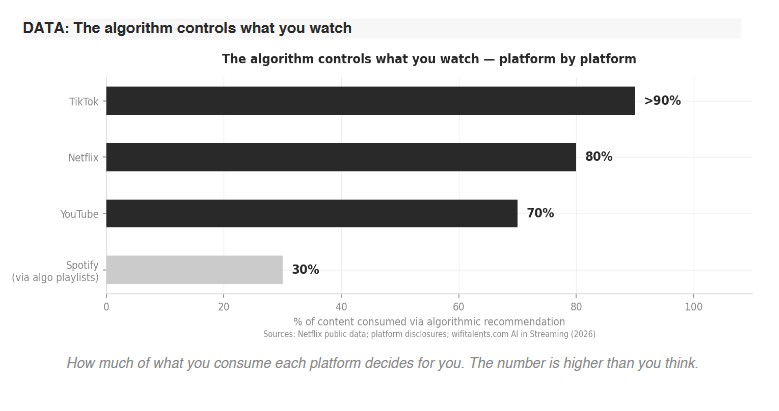

YouTube’s system uses deep neural networks to process watch time, engagement signals, contextual factors, all in service of one metric that, if you strip the language back, is really just: keep the person on the platform longer. TikTok’s For You Page watches your second-by-second viewing behaviour, your dwells, your scrolls, and builds a taste model in real time. It knows what you want before you’ve finished wanting it. Creepy doesn’t begin to cover this, and I say that as someone who also finds it mesmerizing. The mechanics are impressive. The consequences are not.
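TikTok does not publish its model, so what follows is only a toy illustration of the general idea of a taste model updated in real time from viewing behaviour. Each view nudges a per-category affinity toward the fraction of the video actually watched, using an exponential moving average. The categories, the neutral starting value of 0.5, and the smoothing factor `alpha` are all assumptions.

```python
from collections import defaultdict
from typing import Dict, List

def update_taste(taste: Dict[str, float], category: str,
                 watched_s: float, length_s: float, alpha: float = 0.3) -> None:
    """Move one category's affinity toward the latest watch signal.

    The signal is the fraction of the video actually watched (capped at 1.0),
    so a quick scroll-past drags affinity down and a full watch pulls it up.
    """
    signal = min(watched_s / length_s, 1.0)
    taste[category] = (1 - alpha) * taste[category] + alpha * signal

def rank(candidates: List[str], taste: Dict[str, float]) -> List[str]:
    """Order candidate categories by current affinity, highest first."""
    return sorted(candidates, key=lambda c: taste[c], reverse=True)

# Hypothetical session: every category starts at a neutral 0.5.
taste: Dict[str, float] = defaultdict(lambda: 0.5)
events = [("cooking", 14.0, 15.0), ("news", 1.0, 30.0), ("cooking", 15.0, 15.0)]
for category, watched, length in events:
    update_taste(taste, category, watched, length)

print(rank(["news", "cooking"], taste))  # ['cooking', 'news']
```

Three views are enough to reorder the feed; a larger `alpha` would make it react even faster.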

ACCOUNTING ENTERED THE STUDIO

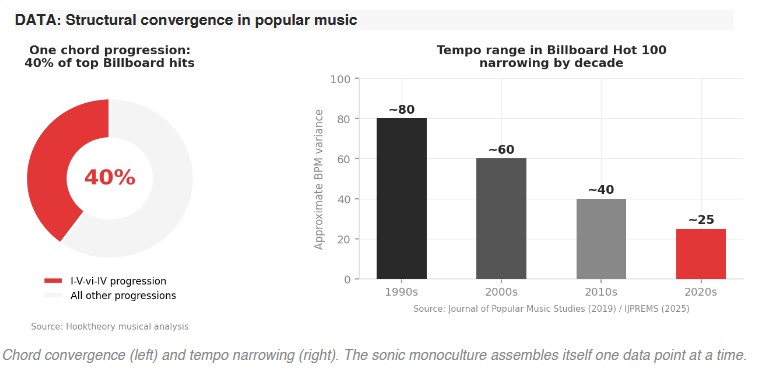

Here’s what happens to music when the algorithm runs the show long enough. Hooktheory, the music analysis company, found that one chord progression — I-V-vi-IV — now appears in nearly 40% of top Billboard hits.
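Counting how often a progression appears is straightforward once songs are transcribed into Roman-numeral chord charts. Hooktheory’s actual methodology isn’t described here, so this is a sketch under stated assumptions: a song “uses” I-V-vi-IV if any rotation of the four-chord loop (vi-IV-I-V, for instance) appears as a contiguous run, and the four chord charts are invented.

```python
from typing import List, Sequence, Tuple

TARGET = ("I", "V", "vi", "IV")

def rotations(loop: Sequence[str]) -> List[Tuple[str, ...]]:
    """Every starting point of a repeating loop: vi-IV-I-V is I-V-vi-IV begun elsewhere."""
    return [tuple(loop[i:]) + tuple(loop[:i]) for i in range(len(loop))]

def uses_progression(chords: Sequence[str], target: Sequence[str] = TARGET) -> bool:
    """True if any rotation of `target` appears as a contiguous run of chords."""
    n = len(target)
    loops = set(rotations(target))
    return any(tuple(chords[i:i + n]) in loops for i in range(len(chords) - n + 1))

def share_using(songs: List[Sequence[str]]) -> float:
    """Fraction of songs whose chord chart contains the target loop."""
    return sum(uses_progression(song) for song in songs) / len(songs)

# Hypothetical toy chord charts.
songs = [
    ["I", "V", "vi", "IV", "I", "V", "vi", "IV"],
    ["vi", "IV", "I", "V"],
    ["I", "IV", "V", "I"],
    ["ii", "V", "I", "vi"],
]
print(share_using(songs))  # 0.5
```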

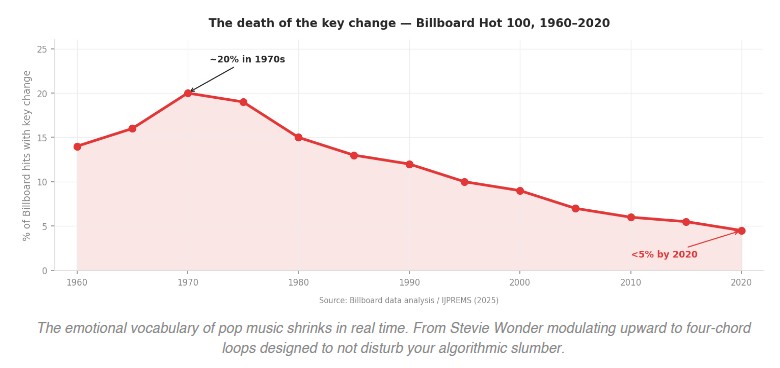

Research published in the Journal of Popular Music Studies found that the tempo range of Billboard Hot 100 songs has narrowed dramatically since the 1990s. An analysis of six decades of Billboard data shows that key changes (those moments when Stevie Wonder would modulate upward and you’d feel your chest lift involuntarily) declined from around 20% of hits in the 1970s to less than 5% by 2020. Dynamic range has collapsed in what engineers grimly call the loudness war. Song structures have converged: shorter intros, and hooks arriving before the 30-second mark, because on Spotify that’s when a play officially “counts” as a stream for royalty purposes.
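Dynamic range can be quantified several ways; one of the simplest is the crest factor, the ratio of a signal’s peak to its RMS level, expressed in decibels. This sketch compares a clean sine wave with the same wave boosted and hard-clipped, a crude stand-in for aggressive limiting. The test signal, the gain of 4, and the function names are assumptions, and mastering engineers typically rely on more refined loudness measures.

```python
import math
from typing import List

def crest_factor_db(samples: List[float]) -> float:
    """Peak-to-RMS ratio in dB: lower means less headroom between average and peak."""
    peak = max(abs(x) for x in samples)
    rms = math.sqrt(sum(x * x for x in samples) / len(samples))
    return 20 * math.log10(peak / rms)

def boost_and_clip(samples: List[float], gain: float, ceiling: float = 1.0) -> List[float]:
    """Crude limiter stand-in: amplify, then flatten anything beyond the ceiling."""
    return [max(-ceiling, min(ceiling, gain * x)) for x in samples]

# Ten full cycles of a sine wave, sampled 1000 times.
clean = [math.sin(2 * math.pi * 10 * n / 1000) for n in range(1000)]
loud = boost_and_clip(clean, gain=4.0)

print(round(crest_factor_db(clean), 2))  # 3.01
print(round(crest_factor_db(loud), 2))
```

The clipped version has the same peak but a much higher average level, so the gap between loud and quiet, the thing a modulation or a drop relies on, shrinks toward zero.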

Think about what that last one means. The architecture of a song is being designed around a payment threshold. We have allowed accounting to enter the studio and instruct the music.

And nobody decided this. That’s the thing that should disturb us most. There was no meeting where a group of executives said, right, let’s make all music the same. It happened through accumulated micro-decisions, each rational in isolation.

Artists and producers watching what succeeds algorithmically, adapting their choices accordingly. Algorithms recommending music based on similarity to previously successful songs. Success metrics rewarding the familiar with a slight edge of novelty, what researchers Noah Askin and Michael Mauskapf called “optimal differentiation”: different enough to stand out, not so different as to alienate listeners or confuse the system. The result is what some researchers have started calling algorithmic monoculture. Nobody intended it. Everyone built it.
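The dynamic needs no villain, only repetition. This deliberately oversimplified simulation reduces every song to a single feature (tempo in BPM), lets a hypothetical recommender surface the songs nearest the median, and has every producer move a fraction `alpha` of the way toward those hits. All numbers are invented and this is not a model from the research cited; the point is only that a small, rational nudge repeated each round shrinks the spread geometrically, by a factor of (1 - alpha) per round.

```python
import statistics
from typing import List

def next_generation(tempos: List[float], k: int, alpha: float) -> List[float]:
    """One round of the loop. The recommender surfaces the k songs closest to the
    median tempo (a stand-in for 'similar to what already works'); every producer
    then moves a fraction `alpha` of the way toward the average of those hits."""
    center = statistics.median(tempos)
    hits = sorted(tempos, key=lambda t: abs(t - center))[:k]
    target = statistics.mean(hits)
    return [t + alpha * (target - t) for t in tempos]

tempos = [70.0, 85.0, 95.0, 100.0, 110.0, 120.0, 128.0, 140.0, 160.0, 174.0]  # invented BPMs
spreads = [statistics.pstdev(tempos)]
for _ in range(5):
    tempos = next_generation(tempos, k=3, alpha=0.2)
    spreads.append(statistics.pstdev(tempos))

print([round(s, 1) for s in spreads])
```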

It’s not a conspiracy. It’s worse. Conspiracies have someone to blame.

THE DISCOVERY TOOL THAT SUPPRESSES DISCOVERY

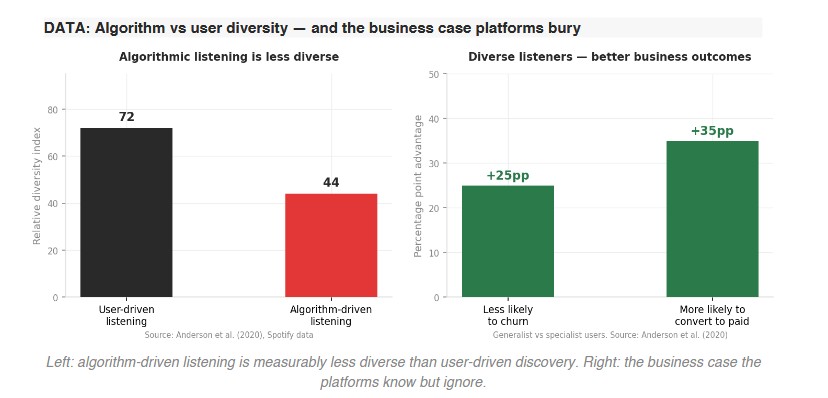

The homophily dynamic is worth sitting with, because it’s the technical name for something you’ve probably felt but couldn’t locate. Homophily: birds of a feather flock together. In recommendation systems, it manifests as a bias toward connecting users with content similar to what they already know. Anderson et al. found something revealing in Spotify’s data: user-driven listening is typically much more diverse than algorithm-driven listening. When you go and find music yourself, you reach wider. When the algorithm feeds you, you eat narrower. Users who became more diverse in their consumption over time tended to do so, the research found, by shifting away from algorithmic consumption and toward organic discovery.
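Anderson et al. measure listening diversity with a generalist-specialist (GS) score computed over song embeddings. The sketch below is a simplified reading of that idea, not necessarily their exact formula: take a play-weighted centroid of a user’s normalized song vectors, then average each song’s cosine similarity to it. Scores near 1 mean a tight cluster (a specialist); lower scores mean a wider spread (a generalist). The two-dimensional vectors are invented.

```python
import math
from typing import List, Sequence

def _unit(v: Sequence[float]) -> List[float]:
    """Scale a vector to length 1 (assumes it is not the zero vector)."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def gs_score(song_vectors: List[Sequence[float]], plays: List[float]) -> float:
    """Play-weighted mean cosine similarity between each song and the user's centroid."""
    units = [_unit(v) for v in song_vectors]
    total = sum(plays)
    dims = range(len(units[0]))
    centroid = _unit([sum(w * u[d] for w, u in zip(plays, units)) / total for d in dims])
    return sum(w * sum(a * b for a, b in zip(u, centroid))
               for w, u in zip(plays, units)) / total

specialist = [[1.0, 0.0], [0.9, 0.1], [1.0, 0.05]]
generalist = [[1.0, 0.0], [0.0, 1.0], [0.7, 0.7]]
print(gs_score(specialist, [1, 1, 1]) > gs_score(generalist, [1, 1, 1]))  # True
```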

The recommendation engine, which was sold to you as a discovery tool, actively suppresses discovery. The more you rely on it, the more it learns your preferences, and the more it serves your preferences, and the more your preferences calcify. You end up in what Gao and colleagues studying short video platforms called an echo chamber — though that term has been so overused in political contexts that we’ve lost the cultural version, which may be more insidious. A political echo chamber reinforces what you think. A cultural echo chamber narrows who you are.

The mechanism is simple. Selective exposure plus homophily, amplified at scale. The algorithm connects you with content similar to what you consumed, which adjusts your taste slightly toward what you consumed, which adjusts the next recommendation slightly further in that direction. Individually invisible increments. Collectively a cage.
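The loop fits in a one-line update. In this toy model, an illustration rather than any platform’s algorithm, a user’s taste is a probability distribution over four genres. The feed over-serves whatever already leads by showing genres in proportion to taste raised to a power `gamma` greater than 1, and the user absorbs a fraction `eta` of what they’re shown: p_next = (1 - eta) * p + eta * p^gamma / sum(p^gamma). Both parameters are assumptions. Entropy (in bits) measures how spread out the taste is, and it never rises from one round to the next.

```python
import math
from typing import List

def entropy_bits(p: List[float]) -> float:
    """Shannon entropy in bits; higher means taste is spread across more genres."""
    return -sum(x * math.log2(x) for x in p if x > 0)

def feedback_step(taste: List[float], gamma: float = 2.0, eta: float = 0.2) -> List[float]:
    """One round: the feed amplifies existing preferences, the user drifts toward the feed."""
    boosted = [x ** gamma for x in taste]
    total = sum(boosted)
    shown = [b / total for b in boosted]  # what the feed actually serves
    return [(1 - eta) * t + eta * s for t, s in zip(taste, shown)]

taste = [0.4, 0.3, 0.2, 0.1]  # hypothetical starting taste over four genres
history = [entropy_bits(taste)]
for _ in range(20):
    taste = feedback_step(taste)
    history.append(entropy_bits(taste))

print(round(history[0], 3), round(history[-1], 3))
```

No single step looks like much; twenty of them concentrate taste noticeably on the genre that was merely first to begin with.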

THE RIVER RUNS IN CIRCLES

There’s a finding from research by Mok and colleagues on Spotify that I find genuinely unsettling, partly because it completely inverts the generational story we tell ourselves. Conventional wisdom: young people are musically adventurous, older people retreat into comfort. The data says essentially the opposite. Older users, it turns out, are more likely to discover new content and show more turnover in their listening from week to week. Younger users are less exploratory. In the researchers’ terms, younger users are “exploiter-generalists” and older users are “explorer-specialists.”

Translation: younger users consume a diverse range of content, but within their existing taste envelope. They’re not really discovering; they’re grazing across a landscape the algorithm has already curated for them. Older users actively push into unfamiliar territory, though within more specialized interests.

The algorithm has trained a generation to confuse variety with exploration. You think you’re discovering because the content keeps changing. But it’s all drawn from the same source. The river looks different every day and it’s running in a circle.
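Variety and exploration are easy to blur, so it helps to measure them separately. This sketch computes two hypothetical weekly metrics for a listener: variety (how many distinct genres they played) and novelty (what share of plays were tracks they had never played before). The metric names, track IDs, and genre labels are invented for illustration and are not necessarily the measures Mok and colleagues used.

```python
from typing import Dict, List, Set, Tuple

def week_profile(plays: List[str], genre_of: Dict[str, str],
                 heard_before: Set[str]) -> Tuple[int, float]:
    """Return (variety, novelty) for one week of plays.

    variety: number of distinct genres played
    novelty: share of plays that were tracks absent from the listener's history
    """
    variety = len({genre_of[track] for track in plays})
    novelty = sum(track not in heard_before for track in plays) / len(plays)
    return variety, novelty

genre_of = {"t1": "pop", "t2": "rock", "t3": "hip-hop", "t4": "r&b",
            "t5": "jazz", "t6": "jazz"}
heard_before = {"t1", "t2", "t3", "t4"}

grazer = week_profile(["t1", "t2", "t3", "t4"], genre_of, heard_before)
explorer = week_profile(["t5", "t6", "t5"], genre_of, heard_before)
print(grazer, explorer)  # (4, 0.0) (1, 1.0)
```

The grazer scores high on variety and zero on novelty; the explorer is the reverse. A feed that keeps changing can still score zero on the second number.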

And then there’s the exploration-burst finding, which is almost poignant: listeners explore in phases. Periods of openness, then retreat into the familiar. An annual spike in discovery around the December holidays, then consolidation. We venture out in bursts and come home. The algorithm watches this cycle and knows exactly when to offer the familiar and exactly when you’re in the mood to be surprised. It’s waiting for you. It knows your rhythms better than you do.
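One simple way to spot such bursts in a listening history is to compare each week’s share of new-to-you plays against the listener’s own typical week. The threshold rule here (at least twice the median) and the weekly numbers are assumptions made for illustration, not the method used in the research.

```python
import statistics
from typing import List

def burst_weeks(new_share: List[float], factor: float = 2.0) -> List[int]:
    """Indices of weeks whose share of new-to-you plays is at least `factor`
    times the listener's own median week (assumes the median is above zero)."""
    baseline = statistics.median(new_share)
    return [week for week, share in enumerate(new_share) if share >= factor * baseline]

# Hypothetical weekly shares of plays that were tracks the listener had never heard.
weekly = [0.05, 0.06, 0.04, 0.05, 0.18, 0.20, 0.06, 0.05]
print(burst_weeks(weekly))  # [4, 5]
```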

FROM ART TO CONTENT

The Gangnam Style moment seeded something beyond its own weirdness. That video established a template (catchy hook, replicable visual gimmick, optimized for algorithmic amplification) that became the grammar of viral content. Every subsequent viral wave borrows from it. Musicologist Nate Sloan describes “the TikTokification of pop music”: songs increasingly built around short, clippable segments designed for social media, with distinctive elements engineered to become meme-worthy. The song is no longer the thing. The song is a delivery mechanism for the clip.

Film and television follow the same logic. Netflix’s data-driven content creation has been critiqued repeatedly for producing work that traces the contours of previous successes. The algorithm that recommends content ends up influencing what content gets made in the first place. We’ve created a feedback loop between consumption data and production decisions, which means the diversity of what gets made is constrained by the diversity of what already existed. Each iteration slightly more like the last.

From distinctive to derivative. From art to content. That’s not a metaphor; it’s a process.

A VALUES QUESTION DRESSED AS A TECHNICAL PROBLEM

I want to be honest about the economics here because the cultural critique only makes sense if you understand why the incentives are so durable. In the attention economy, user engagement is the currency. Recommendation systems are optimized to maximize watch time, stream count, time on platform. This creates what researchers call rich-get-richer feedback loops: content that initially performs gets recommended more, generates more engagement, gets recommended more. Certain things become enormous. Most things become invisible.
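A rich-get-richer loop needs surprisingly little machinery. In this deterministic toy (the play counts, number of recommendation slots, and per-round boost are all invented), the recommender fills its slots with whatever already has the most plays, and the exposure adds plays. Two items that started only slightly ahead end up holding nearly two-thirds of everything played.

```python
from typing import List

def simulate(plays: List[int], rounds: int, slots: int, boost: int) -> List[int]:
    """Each round, recommend the `slots` items with the most plays so far;
    every recommended item gains `boost` plays from the extra exposure."""
    plays = list(plays)
    for _ in range(rounds):
        top = sorted(range(len(plays)), key=lambda i: plays[i], reverse=True)[:slots]
        for i in top:
            plays[i] += boost
    return plays

def top_share(plays: List[int], n: int = 2) -> float:
    """Fraction of all plays held by the n most-played items."""
    return sum(sorted(plays, reverse=True)[:n]) / sum(plays)

start = [12, 11, 10, 10, 9, 9, 8, 8, 7, 6]
end = simulate(start, rounds=10, slots=2, boost=5)
print(end)  # [62, 61, 10, 10, 9, 9, 8, 8, 7, 6]
print(round(top_share(start), 2), round(top_share(end), 2))  # 0.26 0.65
```

Nothing about the other eight items changed; they simply stopped being shown.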

Anderson et al. found something interesting and somewhat damning for platforms: higher content diversity among users is strongly associated with better business outcomes. Generalist users, people who listen across genres and explore widely, are up to 25 percentage points less likely to leave the platform and up to 35 percentage points more likely to convert to paid subscriptions. So the platforms’ own data tells them that diverse listening goes hand in hand with long-term retention.

And yet. The algorithms continue to optimize for short-term engagement because that’s what they’re designed to do. Because quarterly metrics don’t care about the user you lost in year three. Because the system that homogenizes is cheaper to build and easier to scale than the system that diversifies. The platforms know that feeding users the same thing eventually leads to churn. They know it. The algorithm doesn’t care.

That’s not incompetence. That’s a values question dressed up as a technical problem.

BABEL TURNS OUT TO BE A FEATURE

There’s a counter-example worth examining. Gao and colleagues studying echo chambers across short video platforms found something unexpected: while Douyin (the Chinese TikTok) and Bilibili showed strong echo chamber effects, the global version of TikTok did not. Their explanation: linguistic and cultural diversity among users makes it structurally harder for the algorithm to form the tightly clustered homogeneous groups that produce echo chambers. When you can’t cluster people easily, because they come from radically different cultural contexts, speak different languages, have incompatible reference points, the filter bubble fails to form.

Which is fascinating and somewhat counterintuitive. Cultural diversity isn’t just an aesthetic value. It’s apparently a technical defense mechanism against the algorithm’s worst tendencies. Babel turns out to be a feature.

TikTok’s For You Page also appears to be architecturally different from most recommendation systems, designed to deliberately introduce content from outside a user’s established preferences. Strategic serendipity. The insight, whether arrived at philosophically or through pure A/B testing, is that sometimes people want to be surprised. Sometimes novelty is the engagement. Sometimes the algorithm should push rather than confirm. However TikTok got there, it’s worth noting: breaking the homophily logic occasionally seems to make the product better, not worse.
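The simplest version of strategic serendipity is an epsilon-greedy-style rule borrowed from bandit algorithms: on each feed slot, with a small probability, show something from outside the user’s profile instead of the next best match. Nothing here reflects TikTok’s actual implementation, which is not public; the function name, the `explore_rate` parameter, and the item IDs are assumptions.

```python
import random
from typing import List, Optional

def blend_feed(familiar: List[str], outside: List[str], length: int,
               explore_rate: float, rng: Optional[random.Random] = None) -> List[str]:
    """Fill `length` slots. Each slot, with probability `explore_rate`, takes the
    next item from outside the user's profile; otherwise the next familiar match.
    If one pool runs dry, the other fills the remaining slots."""
    if rng is None:
        rng = random.Random()
    fam, out = list(familiar), list(outside)
    feed: List[str] = []
    while len(feed) < length and (fam or out):
        explore = rng.random() < explore_rate
        pool = out if (explore and out) or not fam else fam
        feed.append(pool.pop(0))
    return feed

# Hypothetical item IDs: f* match the user's profile, o* come from outside it.
feed = blend_feed(["f1", "f2", "f3", "f4", "f5"], ["o1", "o2", "o3"],
                  length=5, explore_rate=0.2, rng=random.Random(7))
print(feed)
```

At `explore_rate=0.0` this collapses into a pure similarity feed; raising it trades a little predicted engagement for a steady trickle of surprise.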

THE QUIET INSURGENCY

Some users fight back, quietly and without anyone organizing it. Research on Spotify found that users who increased content diversity over time tended to do so by deliberately drifting away from algorithm-driven listening. They seek human curation. Editorial playlists. Community forums. They game the algorithm by strategically engaging with diverse content to broaden what gets recommended back to them. It’s a quiet insurgency, and it’s real.

There are paths forward that don’t require dismantling the system. Diversity-aware recommendation: explicitly optimizing for a balance of similarity and novelty. Counterfactual recommendations that occasionally challenge a user’s established taste to prevent the filter bubble from hardening. Transparent recommendation that lets users understand and adjust the parameters. Hybrid human-algorithmic curation that combines statistical intelligence with editorial judgment.
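Diversity-aware re-ranking already has well-established forms. One of the oldest is maximal marginal relevance (MMR), from information retrieval: pick items one at a time, trading relevance against similarity to what has already been picked. Using MMR here is an illustrative choice, not a claim about what any platform runs; the relevance scores, two-dimensional vectors, and the `lam` knob (the kind of parameter a transparent system could expose to users) are assumptions.

```python
import math
from typing import Dict, List

def cosine(u: List[float], v: List[float]) -> float:
    """Cosine similarity between two vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))

def mmr_rerank(relevance: Dict[str, float], vectors: Dict[str, List[float]],
               k: int, lam: float) -> List[str]:
    """Greedy MMR: score = lam * relevance - (1 - lam) * max similarity to picks so far.
    lam = 1.0 is pure relevance; lower values push harder for variety."""
    selected: List[str] = []
    candidates = set(relevance)

    def score(item: str) -> float:
        redundancy = max((cosine(vectors[item], vectors[s]) for s in selected), default=0.0)
        return lam * relevance[item] - (1 - lam) * redundancy

    while candidates and len(selected) < k:
        best = max(sorted(candidates), key=score)  # sorted() makes ties deterministic
        selected.append(best)
        candidates.remove(best)
    return selected

relevance = {"hit": 0.95, "soundalike": 0.93, "left_field": 0.60}
vectors = {"hit": [1.0, 0.0], "soundalike": [0.99, 0.14], "left_field": [0.0, 1.0]}
print(mmr_rerank(relevance, vectors, k=2, lam=1.0))  # ['hit', 'soundalike']
print(mmr_rerank(relevance, vectors, k=2, lam=0.5))  # ['hit', 'left_field']
```

With relevance alone, the second slot goes to a near-copy of the first; with the redundancy penalty switched on, it goes to something genuinely different.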

The technology exists. The research supports the business case. What’s missing is the will to implement changes that might suppress short-term engagement metrics by a few percentage points in exchange for a healthier content ecosystem. That’s not a technical problem. It’s a question of what platforms think they’re actually for.

THE MACHINE LEARNED ME AND I LET IT

I feel the pull of this myself; I want to be honest about that. My playlists are narrower than they were a decade ago, and not because I’ve lost appetite. The algorithm taught me and I let it. There’s a comfort in being known by a system, even a stupid system. Even one that’s slowly making you smaller.

The original promise of the internet, that it would give everyone access to the full range of human creativity, dissolving the gatekeepers, has partially inverted. The gatekeepers are back. They don’t have faces or smoke breaks or off days. They’re mathematical functions optimizing for engagement, and they’re more powerful than any radio DJ who ever existed because they’re personalized to each of you simultaneously, operating at a scale that makes human curation look artisanal.

You can navigate this. You can be deliberate about it. Seek the weird, the peripheral, the things the algorithm hasn’t noticed yet. Curate actively, not just consume. Treat discovery as a practice rather than a passive condition.

Or keep letting the machine feed you. It’ll find you exactly what you like. It’ll find you exactly what you like. It’ll find you exactly what you like.

And one day you’ll look up and wonder when everything started sounding the same.

Sources: Anderson et al. (2020), “Algorithmic Effects on the Diversity of Consumption on Spotify”; Mok et al. (2022), exploration and diversity patterns in streaming; Gao et al. (2023), echo chambers in short video platforms; Hooktheory musical analysis data; Journal of Popular Music Studies (2019).